
12/20/2023 Airflow scheduler failover controller

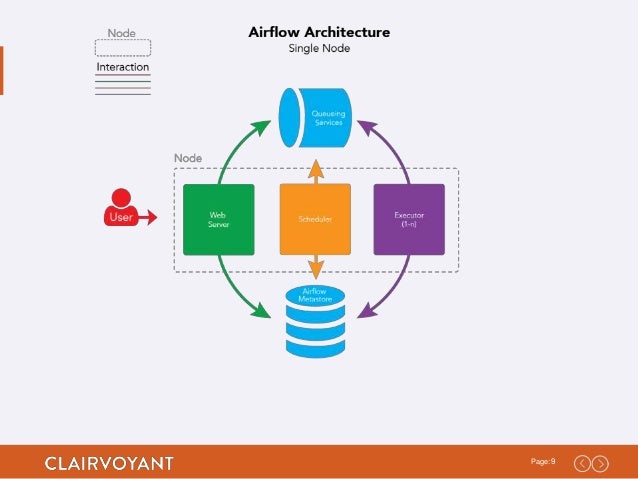

Airflow is a platform to programmatically author, schedule and monitor workflows. Use Airflow to author workflows as Directed Acyclic Graphs (DAGs) of tasks. The Airflow scheduler executes your tasks on an array of workers while following the specified dependencies.

In a typical use case, Airflow needs two components that must be running constantly: the webserver and the scheduler. The former is the web UI used for management. The scheduler was previously Airflow's only single point of failure (SPOF), and there have been many workarounds to keep it running in a disaster situation. The Airflow Scheduler Failover Controller (ASFC) addresses this.

This is a step-by-step set of instructions you can follow to get up and running with the scheduler_failover_controller.

1. Install the ASFC on all the desired machines. See the section entitled "Installation".
2. Run the following CLI command to set up the default configurations in airflow.cfg: `scheduler_failover_controller init`
3. Ensure that the base_url value in airflow.cfg is set to the Airflow webserver.
4. Update the default configurations that were added to the bottom of the airflow.cfg file.
   a. The main ones to update are scheduler_nodes_in_cluster and alert_to_email.
   b. See the Configurations section for more details.
5. Enable all the machines to ssh to each of the other machines as the user you're running Airflow as.
   a. Create a public and private SSH key pair on all of the machines you want to act as schedulers.
   b. Add the public key content to the ~/.ssh/authorized_keys file on all the other machines.
6. Run the following CLI command to test the connection to all the machines that will act as schedulers: `scheduler_failover_controller test_connection`
7. Start the following Airflow daemons:
   a. webserver: `nohup airflow webserver $* > ~/airflow/logs/webserver.logs &`
   b. workers (if you're using the CeleryExecutor): `nohup airflow worker $* > ~/airflow/logs/celery.logs &`
8. Start the Airflow Scheduler Failover Controller on each node you would like acting as the Scheduler Failover Controller (ONE AT A TIME). See the section entitled "Startup/Status/Shutdown Instructions".
9. View the logs to ensure things are running correctly. The location of the logs is determined by the 'logging_dir' configuration entry in airflow.cfg. Note: by default, logs rotate at midnight and only 7 days' worth of backups are kept. This can be overridden in the configuration file.
10. View the metadata to ensure things are being set correctly: `scheduler_failover_controller metadata`

It is recommended that you set up at least the airflow-scheduler for systemd. Airflow provides scripts to help you control the Airflow daemons through the systemctl command. Follow the instructions in $/scripts/systemd/README.md to set it up.

Note: It is also recommended to disable the automatic restart of the scheduler process in the systemd file, so remove the Restart and RestartSec settings from the default systemd file.
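The SSH setup and connection test described above could be scripted roughly as follows. This is only a sketch, not part of the ASFC itself: the function names `run` and `setup_scheduler_ssh`, the `DRY_RUN` variable, and the host names in the usage line are hypothetical. The only external commands used are the standard OpenSSH tools `ssh-keygen` and `ssh-copy-id`, plus the `scheduler_failover_controller test_connection` command mentioned in the instructions. By default the sketch only prints what it would run.

```shell
# DRY_RUN=1 (the default) only prints each command; set DRY_RUN=0 to execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Usage: setup_scheduler_ssh KEY_FILE HOST [HOST...]
# Creates a key pair if KEY_FILE does not exist, appends the public key to
# ~/.ssh/authorized_keys on each host via ssh-copy-id, then tests connectivity.
setup_scheduler_ssh() {
  key_file="$1"
  shift
  if [ ! -f "$key_file" ]; then
    run ssh-keygen -t rsa -b 4096 -N "" -f "$key_file"
  fi
  for host in "$@"; do
    run ssh-copy-id -i "${key_file}.pub" "$host"
  done
  run scheduler_failover_controller test_connection
}
```

For example, `setup_scheduler_ssh ~/.ssh/id_rsa scheduler-1 scheduler-2` (hypothetical host names) would be run as the Airflow user on each scheduler machine in turn, since every machine needs to reach every other one.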
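The note about disabling the scheduler's automatic restart under systemd can be illustrated with a small filter. In systemd unit files the relevant directives are `Restart=` and `RestartSec=`. The sketch below prints a unit file with those two directives removed; the function name `strip_restart` is hypothetical, and the unit file path in the usage line is an assumption that depends on where the unit was installed.

```shell
# Print a systemd unit file with its Restart= and RestartSec= lines removed,
# so systemd no longer restarts the scheduler on its own.
strip_restart() {
  grep -Ev '^[[:space:]]*(Restart|RestartSec)[[:space:]]*=' "$1"
}
```

A possible use, assuming the unit lives at `/etc/systemd/system/airflow-scheduler.service`: write the output of `strip_restart` to a temporary file, review it, copy it back over the unit file, and then run `systemctl daemon-reload` so systemd picks up the change.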
